Today, as on the previous two occasions (the Tower of Hanoi and space partitioning puzzles), we will approach another exciting problem where the recursive strategy plays a significant role.

Josephus problem has a quite interesting historical background, but from the algorithmic perspective, it is a game of elimination. We have a given number of elements (people in the original story), and we eliminate (kill) them following a given and constant pattern. It could be every other element or every third that is eliminated from the game. Finally, we end up with one winning element (person), the last one (man) standing.

The elimination

Let’s define the specific game that we want to solve. Let’s suppose that we have n elements distributed regularly on a circle; it makes it easier to visualize and count out. They are numbered clockwise, and the last one is located next to the first one.

Now, we start the elimination procedure. Starting from number one, we eliminate every other element, so the first one lives, but the second one is eliminated, then the third one lives, and the fourth one is eliminated.

When an element is eliminated, it is no longer a playing element. So, when we run the whole circle and start the next loop, we consider numbers one and three next to each other. When the first one survives again, then the following one — the third, is eliminated. We follow the pattern until only one element is left, and this is our winning element, our winning position.

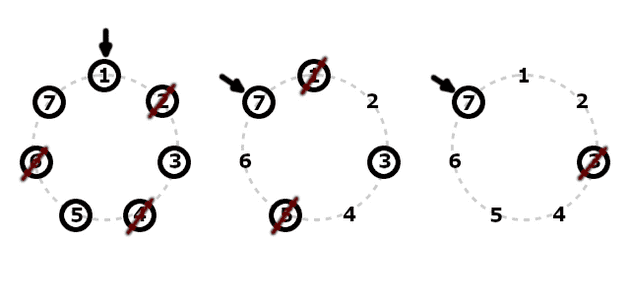

Example with seven elements:

The picture above shows three rounds that explain how we eliminate the following elements and find out the winner position.

We have seven elements to begin with, and we start from the first one. Hence, 1 lives, 2 is eliminated, and then we eliminate positions 4 and 6, accordingly. Then, it is the element 7 turn, and it will live, but we redraw the picture to make the following round clearer.

On the second circuit, we start from position 7 and count every other element out. Hence we eliminate 1, leave 3, and eliminate 5. We are then moving to the last round.

Finally, we have only two positions left, and we start with 7 again. It is pretty apparent right now that 7 lives and 3 is eliminated, so 7 is our winner.

Implementation

Let’s figure out the winning position for n elements when we start from the first position and count out every other element.

For sure, the recursive approach is quite interesting, partly because we have talked about it for two weeks now. However, it is common to find solutions for basic examples and then figure out the general pattern.

We define the winner for n elements as W(n). Right now, we know that W(7) = 7. We can also quickly draw the solution for n equals 1, 2, 3, 4, 5, 6, 7, and 8. Accordingly, the results are 1, 1, 3, 1, 3, 5, 7, and 1. I encourage you to do it on paper.

It seems that position one is quite good if we have up to 8 elements and like to win. The question that arises is how it goes for higher n. We want to implement a working program after all.

Let’s think about some n and what happens when we follow the elimination process for only one loop. If we have an even number of elements, after one loop, we end up with half of our pieces eliminated and starting again from the same position. That is vital for recursion because it allows us to calculate more advanced cases based on simpler ones.

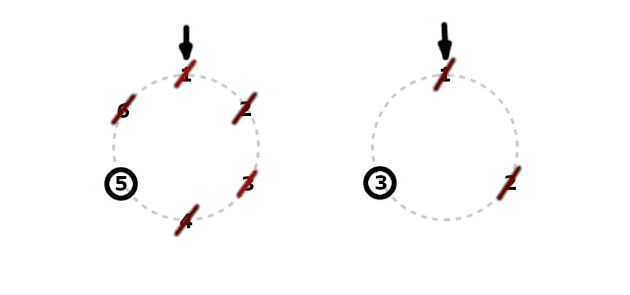

When we have half the elements and start at the same position, the winner is around the same place on the circle, but its assigned position would have a lower number according to the fewer elements. Let’s see how W(6) and W(3) compare, where the winner is in the same place but has a different assigned position:

When we want to find the W(n) and the n is even, we can look for the W(n/2) solution. Having that, we figure out what would be the corresponding place with n elements. Calculating the new position, we need to add all spaces between positions 1 and W(n/2). That is where the missing elements will fit, and it gives us W(n/2) - 1 additional elements. It turns out that the proper position mapping is:

W(n) = W(n/2) + W(n/2) - 1 = 2 W(n/2) - 1That’s why:

W(6) = 2 W(3) - 1 = 2 * 3 - 1 = 6 - 1 = 5What about the odd number n? The first circuit looks similarly, but at the beginning of the second loop, the first position gets eliminated simply because the last odd position n survives.

After the elimination of 1 at the beginning of the second round, we encounter the two-position shift, and thus we start from position 3 (position 2 was eliminated before). A number of elements is now (n-1) / 2 and we discuss W((n-1)/2) solution as our base.

Calculation of the position W(n) requires adding the W((n-1)/2) to the number of spaces between 3 and the winner. We also need to add those two shifted positions. It looks like this:

W(n) = W((n-1)/2) + W((n-1)/2) - 1 + 2 = 2 W((n-1)/2) + 1That’s why:

W(7) = 2 W(3) + 1 = 2 * 3 + 1 = 6 + 1 = 7Right now, we can display our recursion in full:

W(1) = 1

W(n) = 2 W(n/2) - 1 // for even n

W(n) = 2 W((n-1)/2) + 1 // for odd nThis is perfect for our implementation:

let winner = n => {

if (n === 1) {

return 1

}

return n%2 === 0 ? 2*winner(n/2) - 1 : 2*winner((n-1)/2) + 1;

}Still, if you really like math and recursive equations, you may prefer to write the recursion like this:

W(1) = 1

W(2n) = 2 W(n) - 1

W(2n + 1) = 2 W(n) + 1Which is the same but implicitly handles the parity of n.

Optimization

As you probably know, the best way to optimize the algorithm of calculation of a recursive equation is to solve it and write it down in a closed form. That usually provides faster computations.

There are many approaches to finding the solution, but I suggest focusing on when the given position wins. For instance, it is evident that even-number positions never win. They are eliminated during the first loop. However, it seems that the first position regularly wins with growing n.

From the W(2n) = 2 W(n) - 1, we can say that if W(n) equals 1, then we have:

W(2n) = 2 * 1 + 1 = 1So, every time we multiply n by two, and the former number of elements gave us 1 as a winner, it also wins the multiplied game. That’s why the winner for 1, 2, 4, and 8 is always 1. It is valid for all n = 2^m.

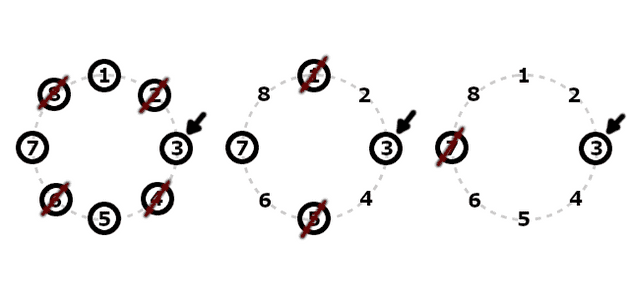

When is the winner 3? How does it correspond to winning as 1? Well, when we start from 3 and have exactly 2^m elements, we can be sure that 3 will be the winner. It is a simple shift around the circle. It only matters where we start. Below is the solution for 8 elements starting from position number 3:

It is a general feature, because we can label elements however we like, and the key is the number of elements in the power of 2. Starting anywhere and having 2^m elements makes the place of the start the winner.

How do we start from a given odd position with 2^m elements left? Well, we may begin from position 1 eliminate l extra elements over the closest 2^m, and end up with exactly 2^m, which quickly determines our winner. When we eliminate l initial elements, we resume at position 2l + 1. That’s because we start from 1, and eliminate every other element.

That gives us the idea that if we want to start from position 3 with 2^m elements, we need to eliminate only one initial element, l = 1, because 2*l + 1 = 3. It is equivalent to having 2^m + l elements and starting at 1.

That is crucial for our further calculations. We can eliminate l initial elements, so we have exactly n elements that are the power of 2. That’s why for n=5:

W(5) = W(2^2 + 1) // where: n = 5, m = 2, l = 1

W(5) [start at 1] = W(4) [start at 3] = 3The whole discussion concludes that when we write the number of elements n as a sum of 2^m and l, with maximal m and minimal l >= 0, we can say that after eliminating the first l elements and resuming at the 2l + 1 position, we are left with only 2^m elements, so our 2l + 1 position will be the winner.

So the winner is:

W(n) = W(2^m + l) = 2l + 1 // where n = 2^m + l, m >= 0, 0 <= l < 2^mHow do we find m and l when n = 2^m + l? In this case, the base 2 logarithm comes to the rescue.

Calculating log2 n should give us the maximal m. Of course, our m should be a natural number, so we skip the fraction. The final non-recursive algorithm goes like this:

let winner = n => {

let m = Math.floor(Math.log2(n))

let l = n - 2**m

return 2*l + 1

}That implementation is more efficient than the recursive one. However, this time the mathematical induction proof for W(2^m + l) = 2l + 1 is slightly different.

Let’s see. We will look at the variable m.

n = 2^m + lBase scenario m=0 works well:

W(1) = W(2^0 + 0) = 2*0 + 1 = 1We assume that it works for m:

W(2^m + l) = 2l + 1And we need to prove two recursive cases for even and odd numbers:

W(2n) = 2 W(n) - 1

W(2n + 1) = 2 W(n) + 1Case W(2n) = 2 W(n) - 1, and proof for m+1:

W(2^(m+1) + l) = W(2 * 2^m + 2 * (l/2)) = W(2 (2^m + (l/2)) =

= 2 W(2^m + (l/2)) - 1 = 2 (2*(l/2) + 1) - 1 = 2 (l + 1) - 1 = 2l + 2 - 1 = 2l + 1Case W(2n + 1) = 2 W(n) + 1, and proof for m+1:

W(2^(m+1) + 1) = W(2^(m+1) + l-1+1) = W(2 (2^m + (l-1)/2) + 1) =

= 2 W(2^m + (l-1)/2) + 1 = 2 (2*(l-1)/2 + 1) + 1 = 2 (l-1 + 1) + 1 = 2l + 1We proved that the statement holds for m+1, because we get 2l + 1 for odd and even numbers.

Conclusion

Today, we discussed the Josephus problem that is a counting-out game. In our version, we assumed that elimination affects every other element. However, this is a variable that we can adjust to expand the puzzle in the general situation. For instance, we may eliminate every third element or start from a different position.

All in all, the important part is that we can approach that kind of problem from two directions, and both of them may direct us toward the solution.

First is the recognition of recursive equations and applying them to both even and odd numbers. The difficulty here is to correctly calculate the complex solution based on the simple one.

On the other hand, during the optimization phase, we recognize that every odd position may be a winner when specific conditions are applied. Having that, we only need to find a way from the general case into those conditions.

I hope you enjoyed this mathematical and algorithmic endeavor. Cheers!